(Un)standard Deviations: Active Learning in a UM Gateway Course

This post was authored by a winner of the 2026 U-M Provost’s Teaching Innovation Prize (TIP), sponsored by the Provost’s Office, CRLT, and the University Library. To learn more about all of the 2026 TIP winners, register for this session at the 2026 Enriching Scholarship Conference (May 6, 2:00-3:00 via Zoom).

I have been teaching undergraduate statistics since 2015, and the question I often return to is not about content—it’s about who gets to succeed. Which students leave feeling like they belong in the discipline, and which ones leave feeling like the discipline was never meant for them?

That question was front and center for STATS 250: Introduction to Statistics and Data Analysis throughout its partnership with the CRLT’s Foundational Course Initiative (FCI). STATS 250 is one of the largest courses on campus, enrolling roughly 3,500 students each academic year, with lectures seating between 400 and 500 students. About 86% of students are LSA undergraduates, but the course also serves students from Engineering, Kinesiology, Business, and Music, among other schools and colleges. The most common majors in the room on any given day span computer science, economics, biology, psychology, and neuroscience; two years after taking the course, students have declared over 166 different majors. STATS 250 is, in the most literal sense, a course for everyone.

That breadth is part of what makes it an important site for thinking about teaching techniques. Students enroll in STATS 250 with very different resources and prior experiences. For many of them, the course is one of the first places they encounter data analysis at the university level and often the moment in their educational careers where they form enduring beliefs about whether they have a future in quant-heavy programs.

For many, a 400-500 person lecture hall in which students only rarely interact with each other and only shallowly engage during class via iClickers is a powerful (read: problematic!) first impression. What happens in this course—and how it happens—matters well beyond the end of the semester. Over the past few years, our instructional team worked to redesign the course curriculum, especially the student lecture experience, to emphasize active learning teaching strategies. By doing so, we hoped STATS 250 would build community among students, promote deeper understanding of foundational ideas in statistics, and foster higher levels of student engagement.

This post is an attempt to document our team’s efforts to implement active learning strategies at scale: what has worked, what hasn’t, and what we are still figuring out.

Benefits of Active Learning

Across numerous studies and multiple meta-analyses, active learning consistently outperforms traditional lecture in undergraduate courses, including large-enrollment STEM classes. Active learning approaches are estimated to raise exam scores by ~0.5 SD and to halve the odds of failure in college-level STEM courses. These benefits hold across class sizes, though the effects are often larger in smaller classes. Active learning also narrows achievement gaps: a meta-analysis found that gaps in exam scores for underrepresented students in STEM shrink by 33% and gaps in passing rates by 45%, especially when active learning is intensive and inclusive.
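To make those two effect sizes concrete, here is a minimal sketch of what "~0.5 SD" and "halving the odds of failure" would mean in a single class. The exam standard deviation (12 points) and the baseline failure rate (30%) are hypothetical values chosen for illustration, not figures from STATS 250 or from the studies summarized above.

```python
def shift_in_points(effect_size_sd: float, exam_sd: float) -> float:
    """Convert a standardized effect size into raw exam points."""
    return effect_size_sd * exam_sd


def halve_odds(p_fail: float) -> float:
    """Return the failure probability after the odds of failure are halved.

    Odds are p / (1 - p), so halving the odds is not the same as
    halving the probability.
    """
    odds = p_fail / (1 - p_fail)
    new_odds = odds / 2
    return new_odds / (1 + new_odds)


# Hypothetical class: exam SD of 12 points, 30% baseline failure rate.
print(shift_in_points(0.5, 12.0))   # 6.0 points
print(round(halve_odds(0.30), 3))   # 0.176
```

Note that halving the odds moves this hypothetical failure rate from 30% to about 18%, not to 15%. The distinction between odds and probabilities is easy to lose when these findings are summarized in a sentence.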

Despite these benefits, active learning strategies are rarely employed in large gateway courses. A national U.S. study using the COPUS protocol found that 55% of undergraduate STEM classes were “didactic” (>80% lecturing) and only 18% were “student-centered” (<50% lecturing). Larger classes are especially likely to be didactic; one recent study showed that the percentage of class time an instructor spends lecturing is predicted to increase for every additional student on the class roster.

(Perceived) Barriers to Implementation

Why is it that active learning strategies are so rarely implemented, especially in gateway courses often considered one of the leakiest portions of the STEM pipeline? Part of me thinks that, because many faculty are the successful products of lecture-style teaching, they gravitate toward the types of classrooms in which they found success as students. Faculty also report being fearful that students will resist pedagogical changes, citing student complaints and poor teaching evaluations. Some research does show that students may feel they learn less in active classes even when they actually learn more, especially early in the semester, but large survey studies find that actual student resistance to active learning strategies is low and that instructors overestimate it.

But there are logistical challenges. Because active learning often takes up more class time than traditional instruction, faculty worry that implementing new strategies will lead to reduced content coverage. Creating brand-new active-learning curricula is difficult and time-consuming alongside faculty’s preexisting teaching and research obligations, and postsecondary institutions typically offer little recognition, reward, or support for instructors looking to transition their courses to an active-learning model.

Active Learning in STATS 250

These were all concerns the instructional team faced when STATS 250 began the process of transitioning to an active-learning model as part of its partnership with the CRLT’s Foundational Course Initiative. Prior to this partnership, the course followed a traditional lecture/lab model. When campus re-opened following the COVID pandemic and UM’s lecture capture system grew in popularity, in-person student attendance quickly declined. In lecture halls built to seat rosters of between 400 and 500 students, often only 100 to 150 students would attend class in person; our GSIs would often teach labs designed to hold 30 students to only 5 to 10.

Although these rates seem disappointingly low, in-person attendance rates in large lectures often sit around 50% when an instructor makes attendance voluntary. Truthfully, I can’t blame students who skip. One study showed that, when lectures are poorly constructed, student attendance is uncorrelated with exam performance. Poorly designed lectures are poor at promoting critical thinking, are associated with low cognitive engagement, and provide students with few opportunities to interact with one another or with class content.

Course Revision, Not Revolution

Our course redesign centered on transitioning STATS 250 from a traditional lecture-based format focused on content delivery and computation to one that offered socialized, hands-on learning and conceptual understanding. Students were provided a choice regarding how they engaged in the course and how their learning would be evaluated. This involved four key changes:

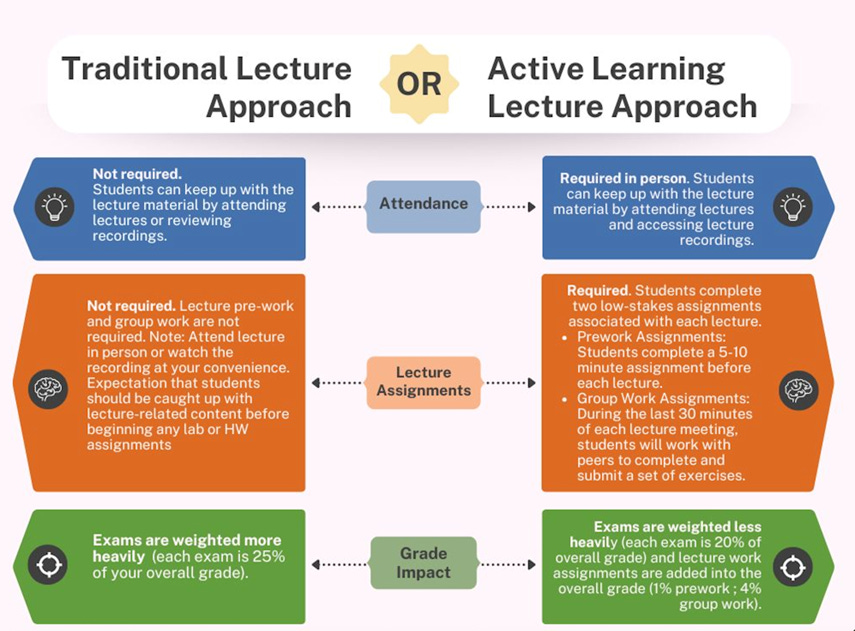

We designed a two-pathway course model in which students could select either an active learning or traditional lecture approach. In the active learning approach, students completed an in-class group activity in every lecture session. Group assignments were scored on accuracy, and exams were not as heavily weighted in the overall grade. In the traditional approach, students were not required to submit group work activities, and exams were more heavily weighted (see figure below).

Lecture time was restructured to devote 45-50 minutes of each class to direct instruction and 30 minutes to group work.

Instructional aides were hired to support student learning in-person during in-class activities.

The course moved to a new active learning classroom in the CCCB to accommodate group work.

Before the new format, students received feedback on their understanding approximately 20 times per semester. Students who opt into the new format receive roughly 60 instances of formative feedback across the term—a threefold increase.

Critically, participation in the active track is entirely opt-in. Students who select the “active” grading scheme earn points for completed lecture assignments, with a correspondingly lower weight on exams. Students who prefer the “traditional” scheme receive feedback on their work but are not graded on it, and their final grades depend more heavily on exams. In other words, students can still take STATS 250 as a lecture-only course if that is what suits them. What we have tried to do is make the alternative—engaged, collaborative, low-stakes practice—as attractive and accessible as possible.

Student Reactions

When we started, we did not know how many students would actually take us up on the offer. We worried about apathy, about logistical confusion, about students arriving to lecture only to head for the exits when the active component of the class began. After all, the meta-analysis mentioned above suggests that the benefits of active learning tend to be stronger in smaller classes.

Increased engagement. Those fears did not materialize. Implementing lecture activities has had positive effects on student engagement, affective beliefs about the course, and end-of-term achievement. Before the redesign, in-person attendance at lectures hovered around 25%, a figure consistent with what many large courses experience when video recording and replay are freely available. After the redesign, daily attendance has climbed to roughly 75%.

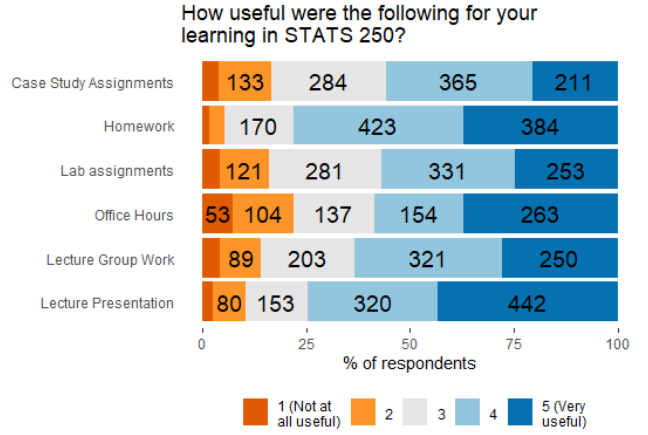

In student feedback surveys we administered toward the end of each semester, the active learning component of the course (listed in the figure below as ‘Lecture Group Work’) consistently ranked third after direct instruction and homework as being useful or very useful to a student’s learning.

Why do students perceive these group activities to be so valuable? Several themes emerge when you inspect students’ responses to open-ended questions in our feedback surveys: students value the immediacy of in-class practice, they appreciate having instructional aides on hand to help, and they report feeling more connected to their peers than they have in other large lecture courses.

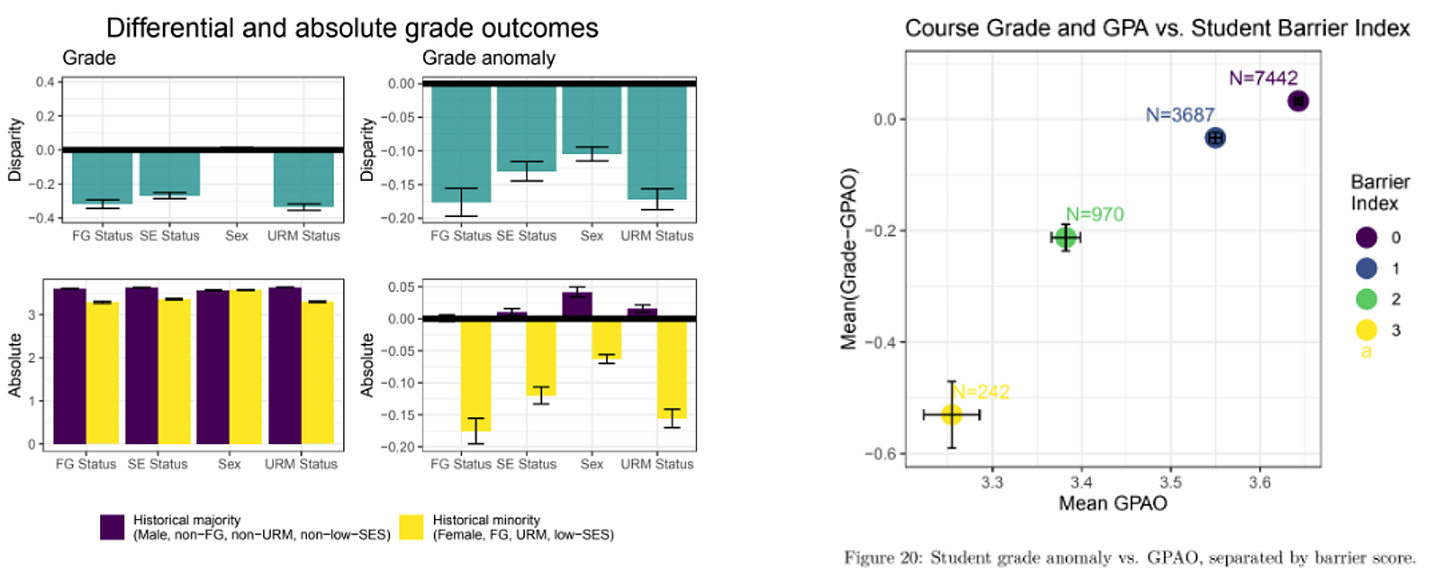

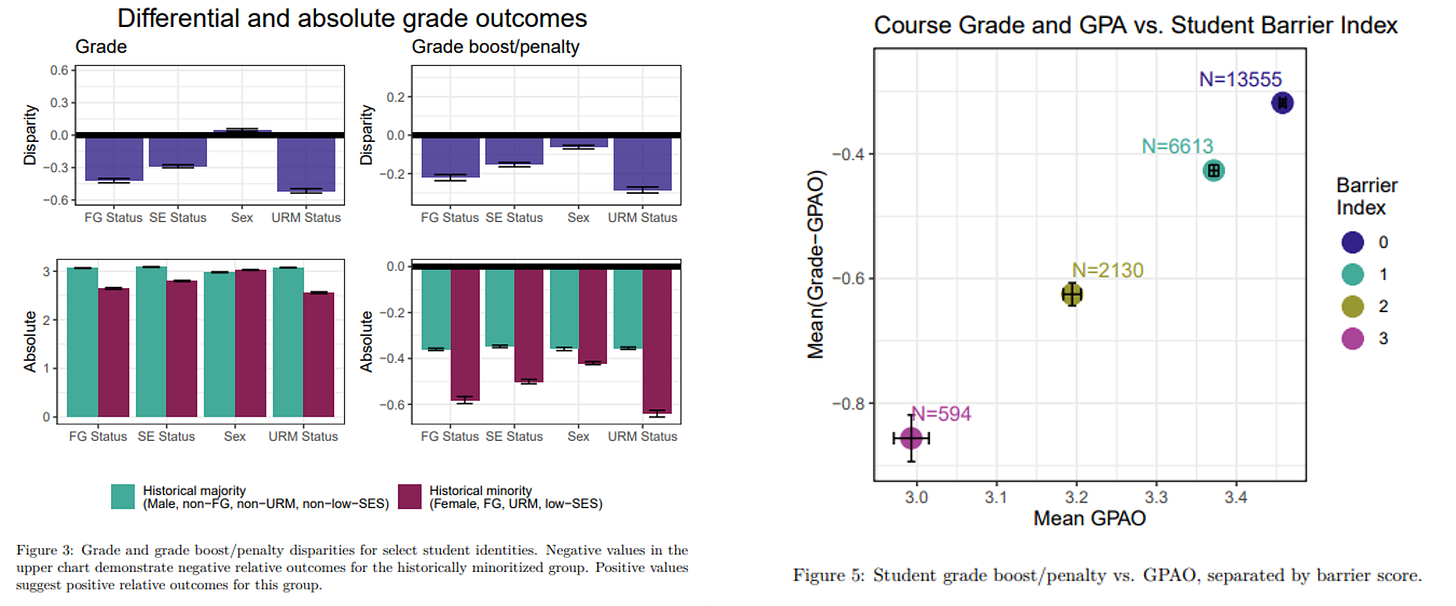

Equity in outcomes. Perhaps the finding that matters most to us is how the redesign may have influenced achievement disparities across student subgroups. Prior to the redesign, STATS 250 exhibited patterns that are depressingly common in gateway STEM courses: students from underrepresented racial minority (URM) groups, first-generation college students, low-SES students, and students who identified as female all tended to earn lower course grades than their counterparts, even after accounting for their performance in other courses. At the outset of our partnership with FCI, we explored these disparities through measures generated by the CRLT comparing students’ STATS 250 grades against their grades across other courses.

Winter 2021 (pre-implementation)

The plots on the left show the difference in Grade and Grade Anomaly (Grade in STATS 250 minus Average grade in all other courses) for students from different identity backgrounds. For example, the W21 results show that students who identified as an underrepresented racial minority (URM), on average, earned a final grade that was 0.5 GPA points lower than non-URM students. In W25, this disparity had been reduced to an average difference of ~0.3 GPA points. Similar reductions were observed by socioeconomic and first-generation college student status. These findings are consistent with prior research suggesting active learning has outsized benefits for students from historically disadvantaged groups.
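For readers who want to see how the Grade Anomaly measure works mechanically, here is a minimal sketch. The four student records, the `in_group` flag, and all function names are hypothetical and invented for illustration; they are not CRLT’s actual data, code, or methodology.

```python
from statistics import mean


def grade_anomaly(course_grade: float, other_grades: list[float]) -> float:
    """Grade in the course minus the average grade in all other courses."""
    return course_grade - mean(other_grades)


def anomaly_gap(records: list[dict]) -> float:
    """Mean anomaly of students in the group minus mean anomaly of everyone else."""
    inside = [grade_anomaly(r["course"], r["others"]) for r in records if r["in_group"]]
    outside = [grade_anomaly(r["course"], r["others"]) for r in records if not r["in_group"]]
    return mean(inside) - mean(outside)


# Hypothetical records on a 4.0 scale (not real student data).
records = [
    {"in_group": True, "course": 3.0, "others": [3.5, 3.5]},   # anomaly -0.5
    {"in_group": True, "course": 2.5, "others": [3.0, 3.5]},   # anomaly -0.75
    {"in_group": False, "course": 3.5, "others": [3.5, 4.0]},  # anomaly -0.25
    {"in_group": False, "course": 3.0, "others": [3.0, 3.5]},  # anomaly -0.25
]

print(anomaly_gap(records))  # -0.375
```

A negative anomaly means a student earned a lower grade in the course than in their other courses. A negative gap means that shortfall is larger, on average, for students in the group than for their counterparts, which is the kind of disparity the comparison is meant to surface.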

Growing Pains

We would be doing a disservice to anyone considering a similar curricular overhaul if we glossed over how much has gone sideways along the way.

Creating new curriculum is an enormous, ongoing project. Every lecture activity has to be designed and calibrated so that it is neither so ‘easy’ as to be pointless nor so hard as to be demoralizing in the 30 minutes available. I have learned that I am terrible at anticipating how long an activity will take students. After each use in class, activities are revised based on faculty, IA, and GSI reflections. Using a Google Form, we ask the instructional team to note, in writing, which exercises challenged students and why. Building and maintaining this cycle of design, reflection, and revision is labor-intensive. For instructors considering a similar model, the honest advice is this: budget significantly more time for curriculum development than you think you’ll need and expect your new curriculum to need iterative refining.

We did cut topics, but we also added them. There was a widespread assumption among the instructional team, in the early planning stages, that switching to a new format that mixed active learning with direct instruction would necessarily reduce content coverage. If direct instruction time dropped from 80 minutes per class to 50, something would have to go. That assumption turned out to be partially correct: we removed inferential procedures for a difference in population proportions, inference for paired data, and certain graphical displays (e.g., quantile-comparison ‘QQ’ plots) from the curriculum. But it also turned out to be partially wrong. Because we had to work with shortened lectures, we found ourselves designing materials that were more efficient, and we ultimately ended up with space in the calendar to add in new topics. STATS 250 now covers estimated effect sizes, statistical power, how confounding variables can influence inferences about associations, and multiple regression with categorical predictors and interaction effects, concepts that typically cannot be covered in a 101 gateway course. The lesson we drew from this is that our prior assumption, that active learning is inherently ‘slower’ at covering topics than traditional lecture, did not hold.

This model requires a substantial instructional team. The 50:1 student-to-instructor ratio we maintain during lecture activities (roughly one GSI or IA per 50 students) does not happen by accident. It requires hiring enough IAs each semester, training them effectively, and retaining them across multiple semesters, since experienced IAs are noticeably more effective than those staffing the role for the first time. Building this pipeline has been one of the more demanding challenges of the project, and it was made harder by the fact that our GSI allocation was simultaneously reduced by approximately 30% during the period when the redesign was being implemented. Scaling an active-learning model without a proportionate investment in instructional support is likely to yield disappointing results.
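For instructors trying to estimate what this staffing target implies, here is a rough back-of-the-envelope sketch. It combines numbers reported elsewhere in this post (rosters of 400 to 500 students, roughly 75% daily attendance, one GSI or IA per 50 students), and it assumes, purely for illustration, that every student who attends takes part in the group activity. The function name is hypothetical.

```python
import math


def staff_needed(students_present: int, students_per_staff: int = 50) -> int:
    """Smallest number of GSIs/IAs that keeps the ratio at or below the target."""
    return math.ceil(students_present / students_per_staff)


# Assumption: about 75% of the roster attends and joins the activity.
for roster in (400, 500):
    present = round(roster * 0.75)
    print(roster, present, staff_needed(present))
# 400 300 6
# 500 375 8
```

Under these assumptions, a single lecture section needs somewhere between six and eight support staff in the room, and a fully attended 500-student section would need ten.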

Next Steps

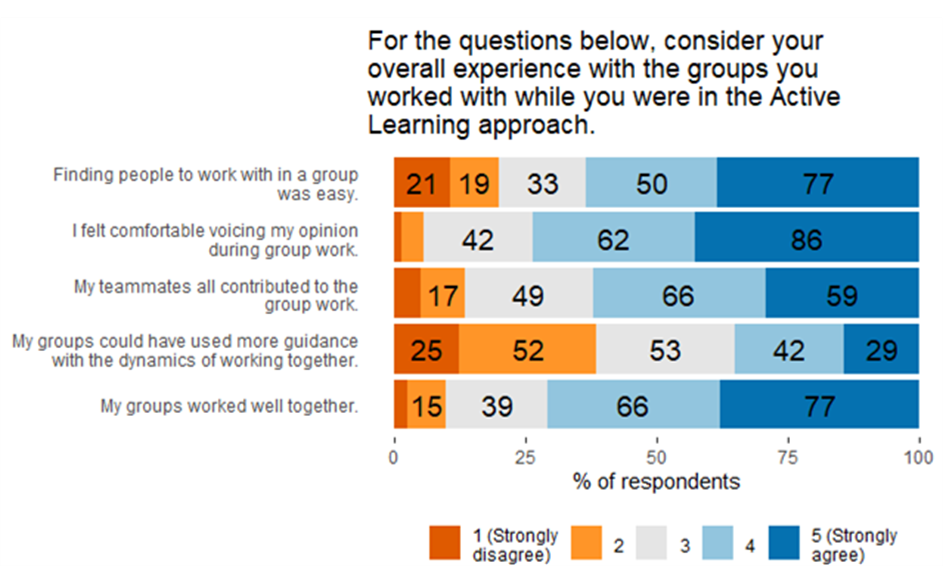

Each semester, the feedback loop between students, IAs, and the instructional team surfaces new problems to address: activities that run long, exercises that probe surface-level recall when we want conceptual understanding, group dynamics that are unproductive. In our most recent student feedback survey, over a third of responding students thought they could have used more guidance on the dynamics of working in collaborative groups.

Right now, group composition is largely left to chance—students sit next to who they sit next to. There is evidence that more intentional group formation can improve learning outcomes and increase the likelihood that students interact productively across lines of difference.

We also need to do better with accessibility. Timed, in-class assignments create real challenges for students with accommodations, and our current solutions are imperfect. As the course grows more reliant on in-class work as a driver of both engagement and learning, ensuring that students with diverse needs can participate fully becomes all the more important.

On the first day of class each semester, when we first introduce students to the course structure and tell them about the choice they’ll soon need to make between the ‘active’ and ‘traditional’ approaches, we sometimes ask them to turn to a neighbor and discuss an old proverb often attributed to Confucius:

“What I hear, I forget. What I see, I remember. What I do, I understand.”

As proverbs go, this is almost too tidy to describe our team’s experiences with active learning. Real classrooms are messier: what students do, they sometimes do wrong; what they understand in October, they can forget by December. But the principle survives the messiness. When we look at a lecture hall where the majority of students are in their seats—bent over an exercise on regression models, arguing with a classmate about the appropriate hypothesis test to apply, getting a correction from a GSI in real time—it looks more like learning than any sage-on-stage style teaching we’ve done before.